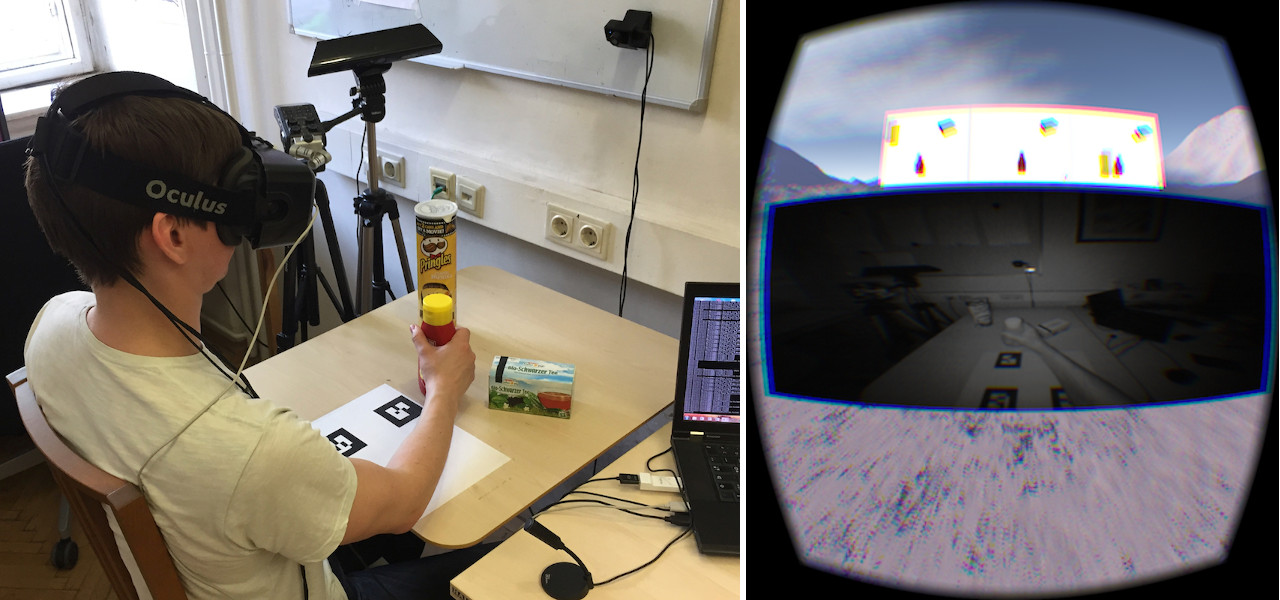

Extension to the Action Verb Corpus (AVCext)

The extension to the Action Verb Corpus consists of 41 recordings in which two users experienced with the system perform the same three actions as in AVC: take (208 instances), put (208 instances), and push (91 instances). The actions were performed without any instructions. The extension is intended to facilitate visual action recognition.

Publications

Details about the collected data can be found in Matthias Hirschmanner, Stephanie Gross, Brigitte Krenn, Friedrich Neubarth, Martin Trapp, Michael Zillich and Markus Vincze: Extension of the Action Verb Corpus for Supervised Learning. ARW 2018.

Authors

- Brigitte Krenn

- Stephanie Gross

- Friedrich Neubarth

- Matthias Hirschmanner

- Michael Zillich

Licence

CC BY 4.0

Sponsors

Human Language Understanding: Grounding Language Learning (HLU), Call 2014 – № 2287-N35

Key facts

- Version: 1.0

- Release date: 30 April 2018

- DOI: 10.5281/zenodo.5140014

- Language: German

- Modality: Multimodal

- Licence: CC BY 4.0

- Associated projects: RALLI, ATLANTIS

- Contact: Brigitte Krenn